CollectorVision: Identifying Trading Cards with On-Device Machine Learning

I've been working on a card scanner for Magic: The Gathering for a while now and wanted to write up how it works.

The short version: you show it a card, it tells you what it is. It handles held cards, sleeved cards, skewed angles, phone cameras. The whole thing runs locally — you can run it in Python on your laptop, or as a static web app in your browser. No server required. The live demo is at https://hanclinto.github.io/CollectorVision/.

The project is called CollectorVision. The code is at https://github.com/HanClinto/CollectorVision.

How it works

The pipeline has three steps.

Step 1 — find the card corners. A small neural network (Cornelius) looks at the frame and predicts where the four corners of the card are. It outputs normalized (x, y) coordinates for each corner, plus a sharpness score indicating confidence. Low sharpness means the frame was blurry or there was no card in view — those frames get skipped.
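The gating rule can be sketched in a few lines of Python. This sketch assumes corners arrive as four (x, y) pairs normalized to [0, 1] relative to the frame's width and height. `CornerPrediction`, `usable_frames`, `to_pixels`, and the 0.5 threshold are hypothetical names and values for illustration, not CollectorVision's API.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator, List, Tuple

Point = Tuple[float, float]


@dataclass
class CornerPrediction:
    """Hypothetical container for one corner-detector output."""
    corners: List[Point]  # four (x, y) pairs, assumed normalized to [0, 1]
    sharpness: float      # confidence score; low means blurry or no card


# Hypothetical cutoff; a real value would be tuned on labeled frames.
SHARPNESS_THRESHOLD = 0.5


def usable_frames(
    preds: Iterable[CornerPrediction], threshold: float = SHARPNESS_THRESHOLD
) -> Iterator[CornerPrediction]:
    """Yield only predictions confident enough to dewarp and embed."""
    for p in preds:
        if len(p.corners) == 4 and p.sharpness >= threshold:
            yield p


def to_pixels(corners: List[Point], width: int, height: int) -> List[Point]:
    """Scale normalized corners back to pixel coordinates in the source frame."""
    return [(x * width, y * height) for x, y in corners]
```

In this sketch, a rejected frame costs only the corner-detector pass. The dewarp, the embedder, and the catalog search never run on it.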

Step 2 — flatten the card. Given four corners, a perspective transform maps the card to a flat rectangle: 252 × 352 pixels, every time, regardless of how the card was held. This is the canonical crop.
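The perspective transform is a 3×3 homography determined by the four corner correspondences. Below is a minimal NumPy sketch that solves for it directly. It assumes corners are ordered top-left, top-right, bottom-right, bottom-left; `homography`, `project`, and `CANONICAL` are illustrative names. In OpenCV, `cv2.getPerspectiveTransform` computes the same matrix from two float32 arrays of four points each, and `cv2.warpPerspective(image, H, (252, 352))` resamples the image with it.

```python
import numpy as np

CROP_W, CROP_H = 252, 352  # canonical crop size from the post

# Destination corners, assumed order: top-left, top-right, bottom-right, bottom-left.
CANONICAL = np.array(
    [[0, 0], [CROP_W - 1, 0], [CROP_W - 1, CROP_H - 1], [0, CROP_H - 1]],
    dtype=float,
)


def homography(src: np.ndarray, dst: np.ndarray) -> np.ndarray:
    """Solve for H (with H[2, 2] fixed to 1) mapping each src point to its dst point.

    Each correspondence (x, y) -> (u, v) yields two linear equations, from
    u = (h0*x + h1*y + h2) / (h6*x + h7*y + 1) and the analogous one for v.
    """
    rows, rhs = [], []
    for (x, y), (u, v) in zip(src, dst):
        rows.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        rhs.append(u)
        rows.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        rhs.append(v)
    h = np.linalg.solve(np.array(rows, dtype=float), np.array(rhs, dtype=float))
    return np.append(h, 1.0).reshape(3, 3)


def project(H: np.ndarray, pts: np.ndarray) -> np.ndarray:
    """Apply H to 2-D points via homogeneous coordinates."""
    pts = np.asarray(pts, dtype=float)
    homo = np.hstack([pts, np.ones((len(pts), 1))]) @ H.T
    return homo[:, :2] / homo[:, 2:3]
```

Take a skewed card whose corners sit at, say, (100, 80), (400, 120), (420, 500), and (90, 470) in the photo. For it, `project(homography(src, CANONICAL), src)` returns the four canonical corners, up to floating-point error. If three corners are collinear, the system is singular and `np.linalg.solve` raises `LinAlgError`. A caller could treat that case the same way as a low-sharpness frame.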

Step 3 — embed and search. A second network (Milo) converts the flat crop to a 128-number vector. That vector gets compared against a prebuilt catalog of ~108,000 reference vectors, one per card face, using a dot product. The closest match is the result.
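The search step is a brute-force nearest-neighbor lookup. Here is a minimal NumPy sketch. It assumes the catalog is a matrix of L2-normalized embeddings (one row per card face) with a parallel list of card IDs; under that assumption, the dot product equals cosine similarity. `normalize` and `search` are hypothetical helpers, not the library's `Catalog.search`.

```python
import numpy as np


def normalize(v: np.ndarray) -> np.ndarray:
    """L2-normalize along the last axis so dot products equal cosine similarity."""
    norms = np.linalg.norm(v, axis=-1, keepdims=True)
    return v / np.maximum(norms, 1e-12)


def search(query: np.ndarray, catalog: np.ndarray, ids: list, k: int = 5):
    """Return the k best (score, card_id) pairs, highest score first.

    Assumes every row of `catalog` is already L2-normalized.
    """
    scores = catalog @ normalize(query)        # one dot product per reference vector
    k = min(k, len(scores))
    top = np.argpartition(-scores, k - 1)[:k]  # indices of the k largest scores, unordered
    top = top[np.argsort(-scores[top])]        # sort just those k, best first
    return [(float(scores[i]), ids[i]) for i in top]
```

For the real catalog, the matrix is about 108,000 × 128, roughly 55 MB as float32. A single matrix-vector product scores every card face at once, and `np.argpartition` picks out the top k without sorting all of the scores.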

The whole pipeline runs in under 100ms on a laptop CPU. Milo and Cornelius are both exported to ONNX, so the only ML dependency is onnxruntime — no PyTorch, no GPU.

Pictures

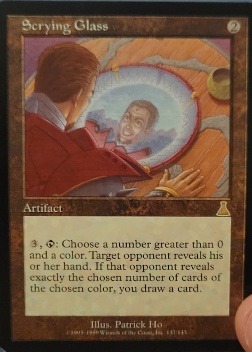

Here is a real-world photo of a card:

Cornelius finds the four corners:

The perspective is corrected to a flat crop:

Milo produces a 128-d embedding. The nearest neighbor in the catalog is Scrying Glass (Urza's Destiny), with a cosine similarity of 0.93.

Installing

For now, install directly from GitHub:

```bash
pip install git+https://github.com/HanClinto/CollectorVision.git
```

A few lines to identify a card:

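```python
import cv2
import collector_vision as cvg

# Downloads the reference catalog from Hugging Face on first run, then reuses the local cache.
catalog = cvg.Catalog.load("hf://HanClinto/milo/scryfall-mtg")

image = cv2.imread("my_card.jpg")
if image is None:  # cv2.imread returns None rather than raising on a missing or unreadable file
    raise FileNotFoundError("could not read my_card.jpg")

detection = cvg.NeuralCornerDetector().detect(image)  # Cornelius: find the four corners
crop = detection.dewarp(image)                         # flatten to the 252 x 352 canonical crop
emb = catalog.embedder.embed(crop)                     # Milo: 128-d embedding
score, card_id = catalog.search(emb)[0]                # top-ranked (score, card_id) pair

print(card_id, score)
```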

The catalog (~29 MB) downloads once from Hugging Face on first run and is cached locally. After that, everything runs offline.

The series

This is the first post in a series going through the details.

1. This post: overview
2. Cornelius: the corner detector
3. Milo: the embedding model
4. Running it locally: Python library, REST server
5. The browser scanner
6. The hybrid split: local inference, server lookup

The interesting parts are in posts 2 and 3. The browser scanner post (5) covers some unusual engineering problems around running ONNX models in WebAssembly and a bug in WebGPU on Android ARM that cost a few days.
